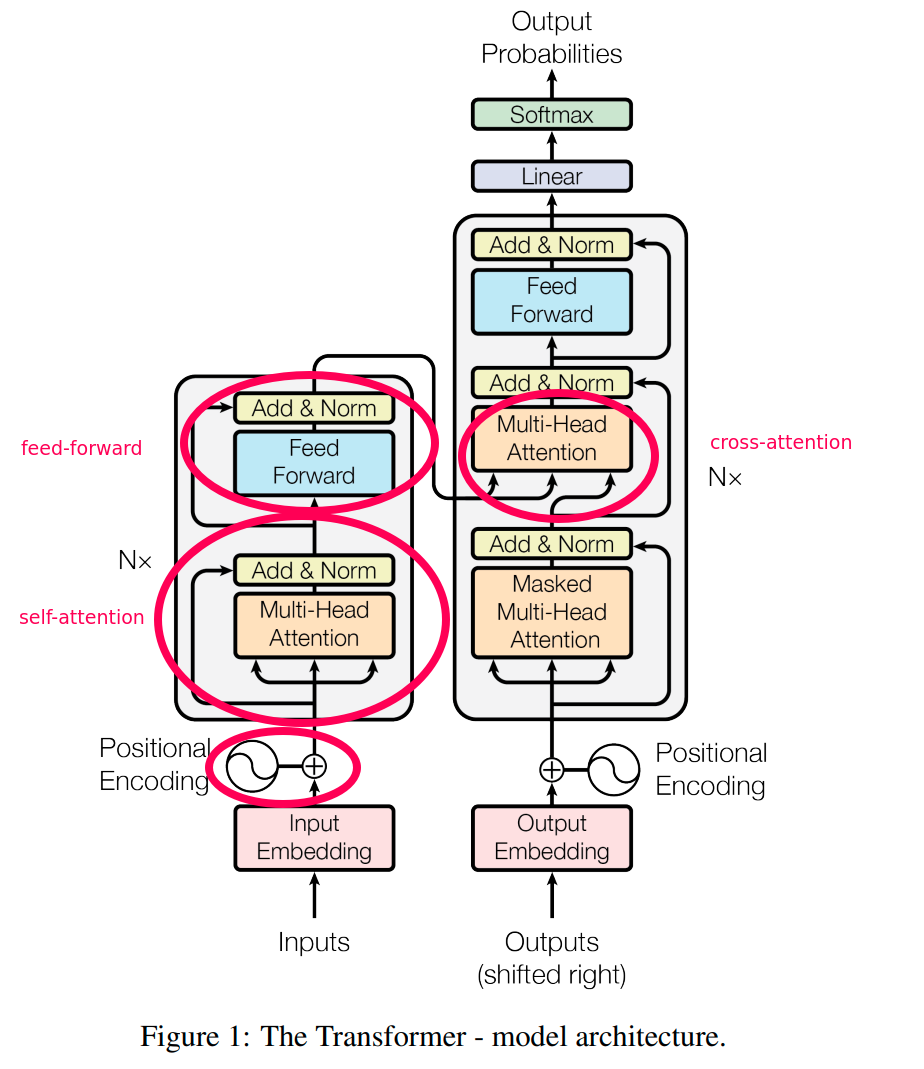

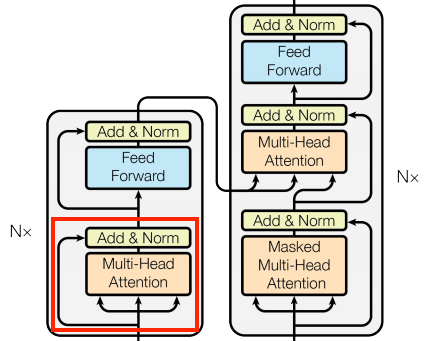

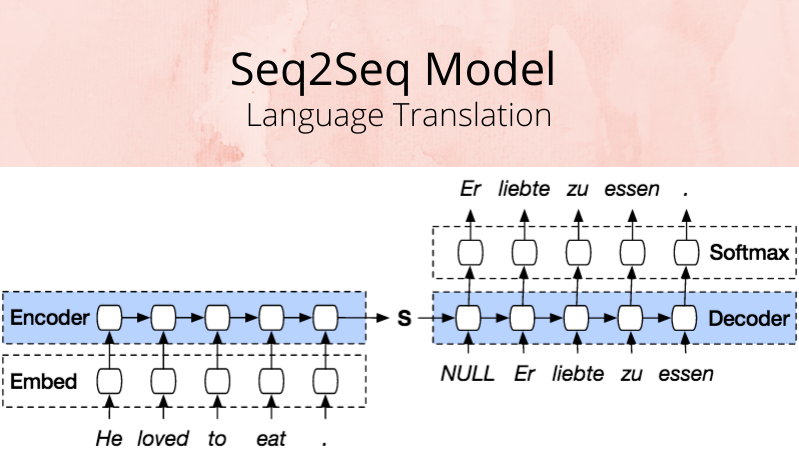

Transformer model architecture (this figure's left and right halves... | Download Scientific Diagram

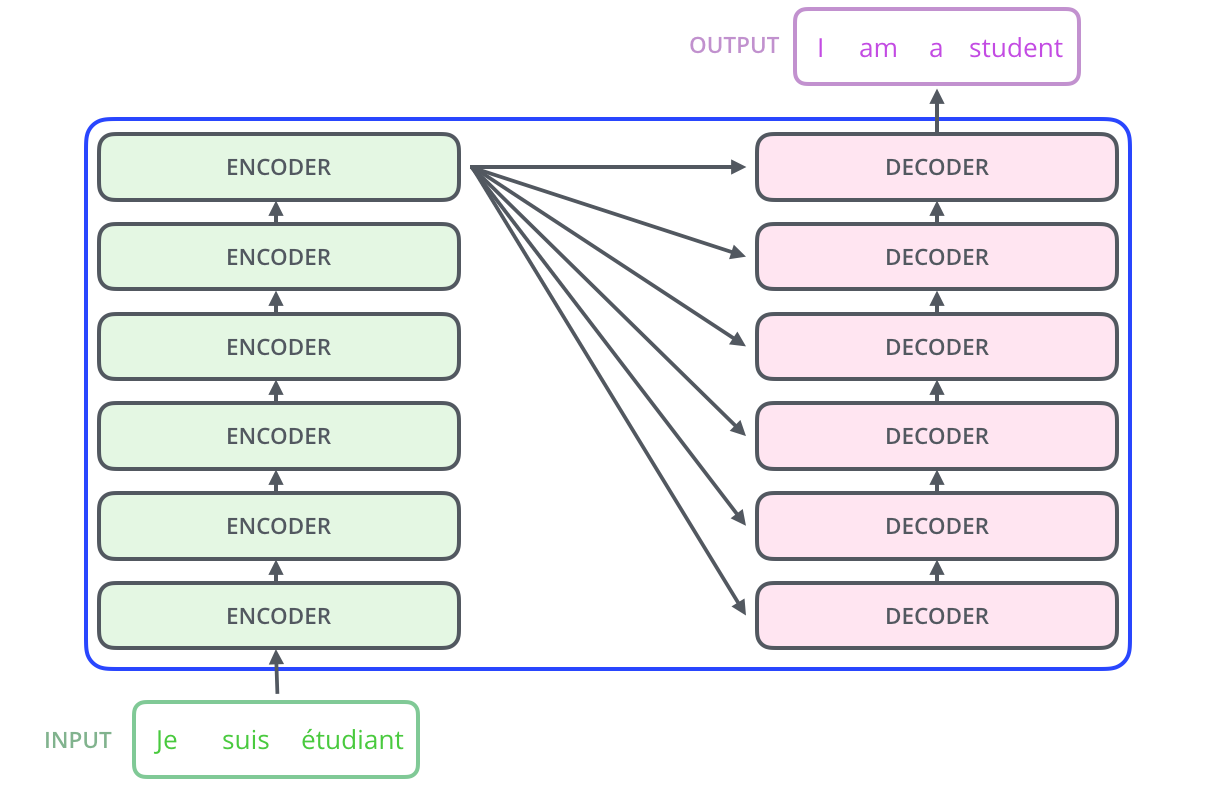

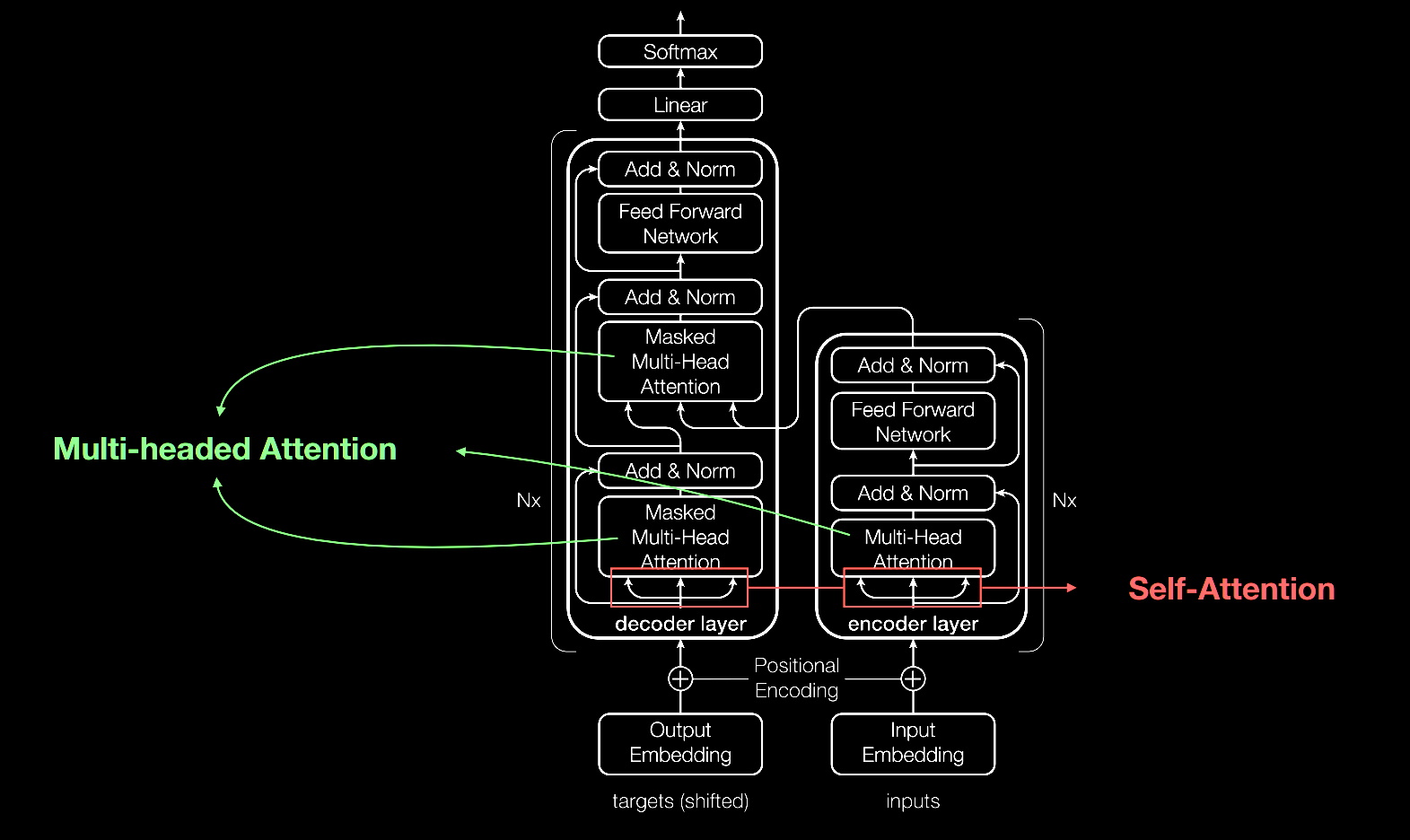

BiLSTM based NMT architecture. 2) Transformer - Self Attention based... | Download Scientific Diagram

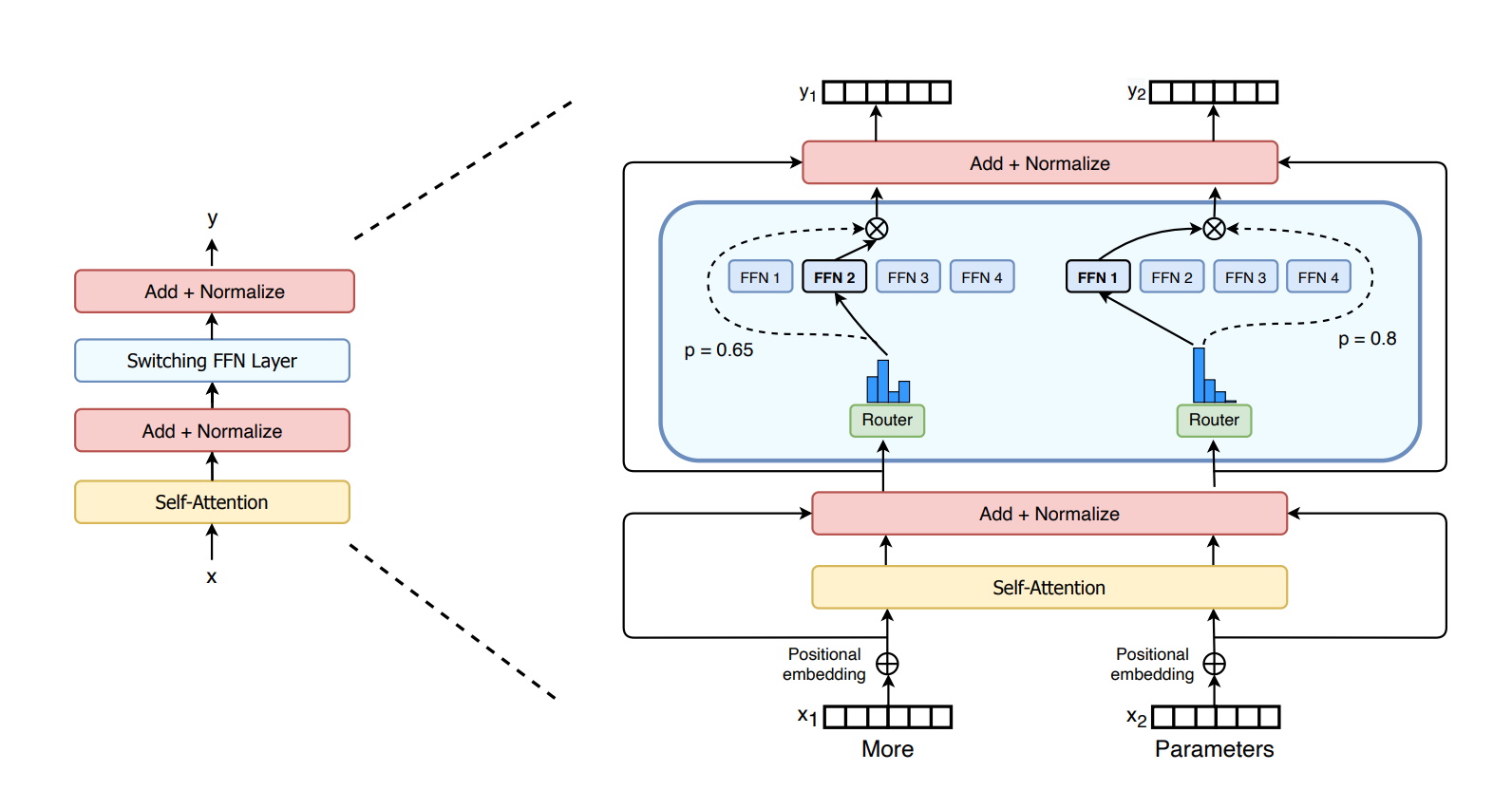

Make Every feature Binary: A 135B parameter sparse neural network for massively improved search relevance - Microsoft Research

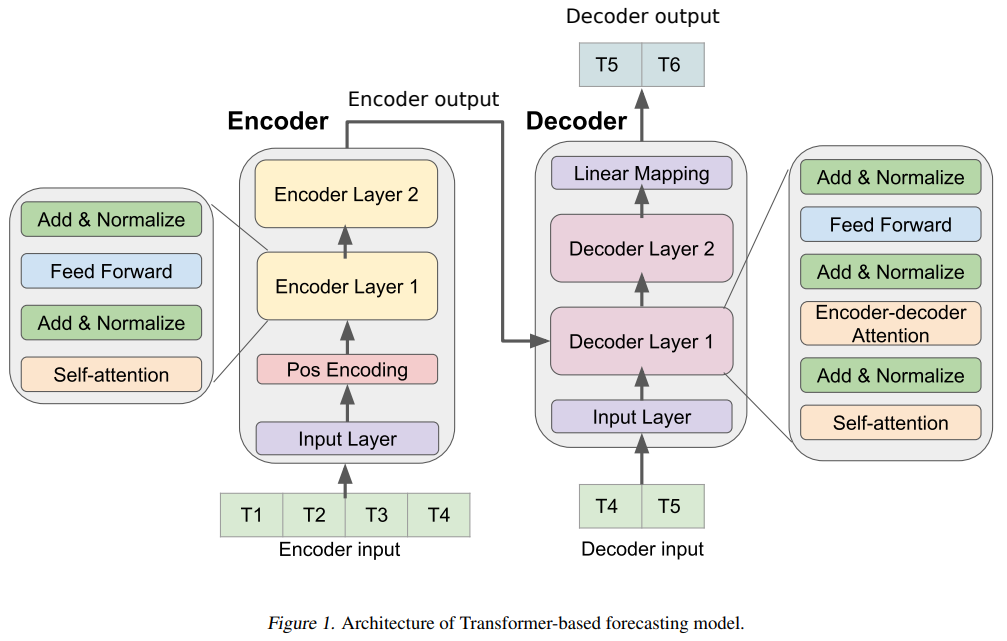

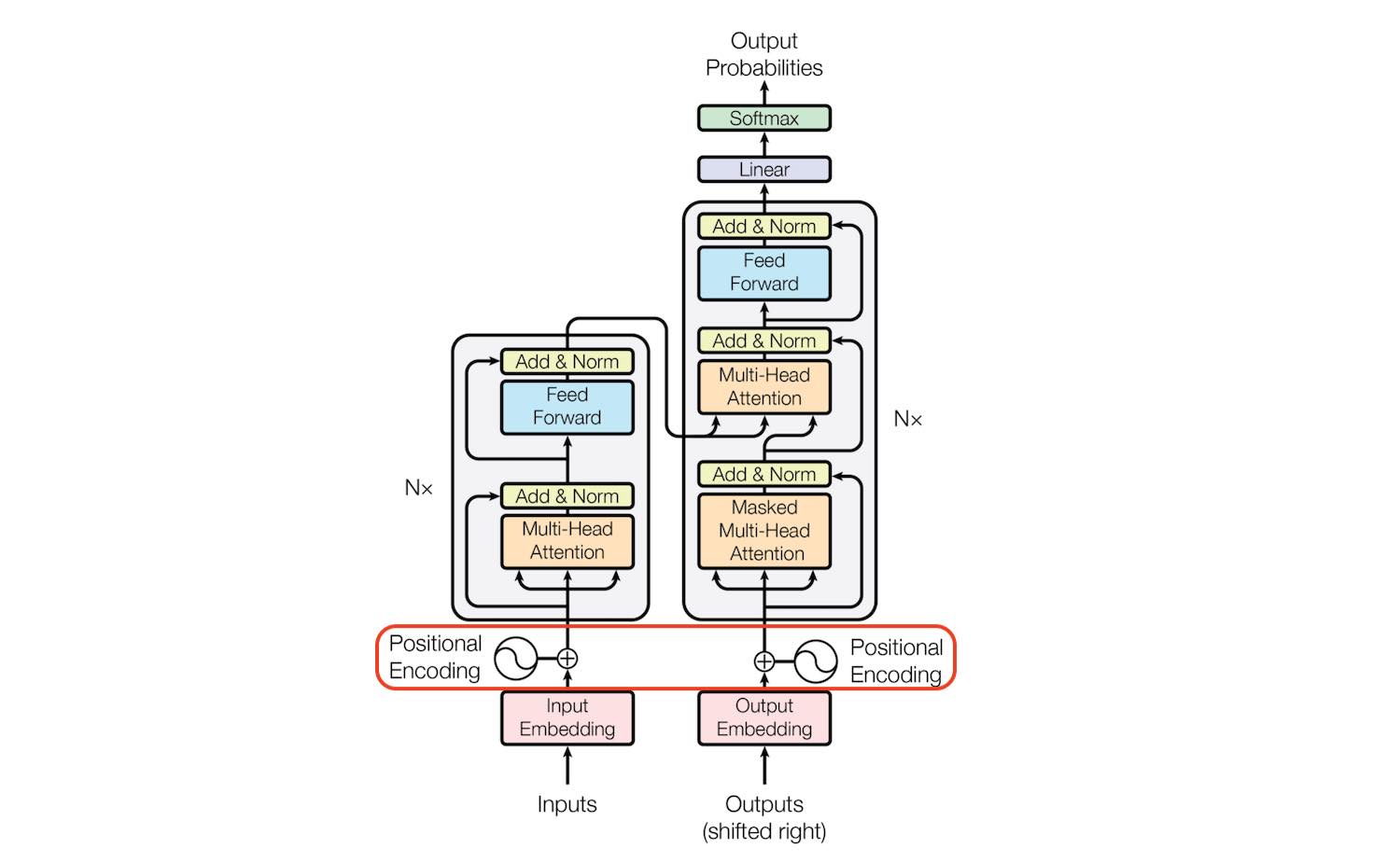

Transformer machine learning language model for auto-alignment of long-term and short-term plans in construction - ScienceDirect

Warsaw U, OpenAI and Google's Hourglass Hierarchical Transformer Model Outperforms Transformer Baselines | Synced

Microsoft Improves Transformer Stability to Successfully Scale Extremely Deep Models to 1000 Layers | Synced

![Transformer Model Architecture. Transformer Architecture [26] is... | Download Scientific Diagram](https://www.researchgate.net/publication/342045332/figure/fig2/AS:900500283215874@1591707406300/Transformer-Model-Architecture-Transformer-Architecture-26-is-parallelized-for-seq2seq.png)